Study: Computer Detects Skin Cancer As Accurately As Dermatologists

A new study published in Nature suggests that computers can be trained to identify cancerous skin lesions from images as accurately as dermatologists. The Stanford researchers hope the technology can eventually be delivered through a smartphone app, helping patients check their own skin for signs of cancer.

Dermatologists usually determine whether a mole or other skin abnormality is cancerous by examining it visually. Sometimes they request a biopsy, a small sample of tissue cut out for laboratory examination, to confirm the diagnosis. Sebastian Thrun, the study's senior author, founder of the research and development lab Google X and an adjunct professor at Stanford University, sought to create an “automated dermatologist” that could identify skin cancer at first glance by adapting an existing deep learning system.

"Our objective is to bring the expertise of top-level dermatologists to places where the dermatologist is not available," said Thrun.

Rather than building an algorithm from scratch, the researchers started from an algorithm developed by Google that had already been trained on 1.28 million images spanning 1,000 object categories, such as cats and dogs.
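Reusing a network trained on one task as the starting point for another is known as transfer learning. The sketch below illustrates the idea in plain Python under deliberately simplified assumptions: the "pretrained" feature extractor (`PRETRAINED_WEIGHTS`, `extract_features`) is a hypothetical set of hand-picked weights standing in for a large image network, the four-pixel "images" in `toy_data` are made-up data, and only a new output layer (a logistic regression head) is trained while the borrowed layers stay frozen. This frozen-features variant is one common form of transfer learning; it is not a description of exactly how the Stanford team adapted Google's network.

```python
import math

# Hypothetical stand-in for a pretrained network. In the study, this role was
# played by a network already trained on 1.28 million everyday images; here
# the "pretrained" weights are hand-picked numbers so the sketch runs instantly.
PRETRAINED_WEIGHTS = [
    [1.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 1.0],
    [1.0, -1.0, 1.0, -1.0],
]


def extract_features(x, weights=PRETRAINED_WEIGHTS):
    """Frozen feature extractor: ReLU(W x). These weights are never updated."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in weights]


class LogisticHead:
    """New output layer for the new task (1 = 'malignant', 0 = 'benign'),
    trained from scratch on top of the frozen features."""

    def __init__(self, n_features):
        self.w = [0.0] * n_features
        self.b = 0.0

    def predict_proba(self, feats):
        z = self.b + sum(w * f for w, f in zip(self.w, feats))
        return 1.0 / (1.0 + math.exp(-z))

    def update(self, feats, label, lr):
        # For the logistic (cross-entropy) loss, d(loss)/dz = p - y.
        err = self.predict_proba(feats) - label
        self.w = [w - lr * err * f for w, f in zip(self.w, feats)]
        self.b -= lr * err


def train_head(data, epochs=200, lr=0.05):
    """Stochastic gradient descent on the head only; the extractor stays frozen."""
    head = LogisticHead(len(PRETRAINED_WEIGHTS))
    for _ in range(epochs):
        for x, y in data:
            head.update(extract_features(x), y, lr)
    return head


# Hypothetical toy "images" of four pixels each, for illustration only.
toy_data = [
    ([1.0, 1.0, 0.0, 0.0], 1),
    ([0.9, 0.8, 0.1, 0.0], 1),
    ([0.0, 0.0, 1.0, 1.0], 0),
    ([0.1, 0.0, 0.9, 0.8], 0),
]

head = train_head(toy_data)
print(head.predict_proba(extract_features([0.8, 0.9, 0.0, 0.1])))
```

Freezing the borrowed layers keeps training cheap and needs relatively little labeled data, because only a handful of parameters are learned; with enough labeled images, practitioners often also fine-tune some or all of the pretrained layers.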

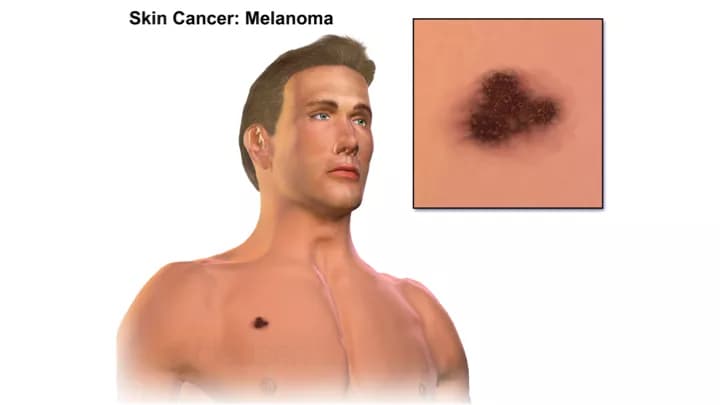

According to the Centers for Disease Control and Prevention (CDC), skin cancer is the most common type of cancer in the United States. Melanoma, the deadliest form of skin cancer, is largely caused by excessive exposure to ultraviolet (UV) light, whether from the sun or from artificial sources such as tanning beds. Although melanoma represents less than five percent of all skin cancers in the United States, it accounts for nearly three-quarters of all skin cancer deaths. The five-year survival rate for melanoma is 99 percent when the disease is detected early, but it plummets to 14 percent when the cancer is found at its latest stage.

Differences between artificial intelligence and human intelligence

"What's really powerful about computer vision and these convolutional neural nets is, all you have to do is specify the input and output, and the machine will learn its own rules how to do that automatically," said Carl Vondrick, a Ph.D. candidate at MIT's Computer Science and Artificial Intelligence Lab, who was not involved in this study. "It learns kind of a series of mathematical transformations to essentially transform an image into an answer (to the question) 'Is there skin cancer or not?'"
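To make the idea of "a series of mathematical transformations" concrete, here is a toy forward pass through a minimal convolutional network in plain Python. Everything in it is hypothetical: the 5x5 "images", the 2x2 filter `KERNEL` and the final-layer parameters `DENSE_W` and `DENSE_B` are hand-picked for illustration rather than learned from data, and a real network such as the one in the study stacks many more layers whose weights are learned during training. The pipeline is convolution, then ReLU, then max pooling, then a linear layer whose output a sigmoid squashes into a probability for a yes/no question.

```python
import math


def conv2d(image, kernel):
    """'Valid' 2-D convolution (technically cross-correlation, as in most CNN libraries)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [[sum(image[i + a][j + b] * kernel[a][b]
                 for a in range(kh) for b in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]


def relu(fmap):
    # Zero out negative responses.
    return [[max(0.0, v) for v in row] for row in fmap]


def max_pool(fmap, size=2):
    # Keep the strongest response in each non-overlapping size x size window.
    return [[max(fmap[i + a][j + b] for a in range(size) for b in range(size))
             for j in range(0, len(fmap[0]) - size + 1, size)]
            for i in range(0, len(fmap) - size + 1, size)]


def flatten(fmap):
    return [v for row in fmap for v in row]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def tiny_cnn(image, kernel, dense_w, dense_b):
    """Image -> convolution -> ReLU -> max pooling -> linear layer -> probability."""
    features = flatten(max_pool(relu(conv2d(image, kernel))))
    return sigmoid(dense_b + sum(w * f for w, f in zip(dense_w, features)))


# Hypothetical, hand-picked parameters (a trained network would learn these).
KERNEL = [[1, 1],
          [1, 1]]  # responds to 2x2 patches with high pixel values
DENSE_W = [0.5, 0.5, 0.5, 0.5]
DENSE_B = -1.0

blank = [[0] * 5 for _ in range(5)]
spot = [[1 if 1 <= r <= 3 and 1 <= c <= 3 else 0 for c in range(5)]
        for r in range(5)]

print(tiny_cnn(blank, KERNEL, DENSE_W, DENSE_B))  # about 0.27
print(tiny_cnn(spot, KERNEL, DENSE_W, DENSE_B))   # about 0.999
```

The code fixes only the sequence of operations, which mirrors the point of the quote: in a real system the numbers in `KERNEL`, `DENSE_W` and `DENSE_B` would be adjusted automatically during training from labeled examples rather than chosen by hand.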

Vondrick notes an important distinction between computer vision systems and the human visual system: humans can recognize patterns from small amounts of data, whereas computers need thousands or even billions of examples to classify with comparable accuracy. This study used about 130,000 images of skin lesions representing more than 2,000 different diseases. Given enough training data, computer vision systems may eventually learn to detect subtle differences in digital photographs that the human eye would miss.

Thrun stresses that real-world testing in a clinical setting is still needed, but the researchers believe this approach could be extended to other areas of medicine, such as ophthalmology, radiology, and pathology.

Similar research by a team at the University of Illinois at Urbana-Champaign was published in September 2016 in Scientific Reports, a journal from the publisher of Nature. That study used quantitative phase imaging of tissue samples to predict prostate cancer recurrence.

References:

- Esteva, A., Kuprel, B., Novoa, R. A., Ko, J., Swetter, S. M., Blau, H. M., & Thrun, S. (2017). Dermatologist-level classification of skin cancer with deep neural networks. Nature, 542, 115–118. DOI: 10.1038/nature21056

- Centers for Disease Control and Prevention. (2016, September 28). Skin cancer. Retrieved January 26, 2017, from https://www.cdc.gov/cancer/skin/

- Sridharan, S., Macias, V., Tangella, K., Melamed, J., Dube, E., Kong, M. X., ... & Popescu, G. (2016). Prediction of prostate cancer recurrence using quantitative phase imaging: Validation on a general population. Scientific Reports, 6. DOI: 10.1038/srep33818
